projects/experiences

founded nexus neurotech @ stanford

I lead one of Stanford's largest engineering student orgs, organizing hardware & AI-focused makerspace projects, industry collabs with companies like Neuralink and Science, and campus-wide demo days.

undergraduate researcher @ neural prosthetics and translational lab, stanford

For a novel brain-to-voice project, I'm leading GAN vocoder development and working on both study design and deep learning approaches for neural spectrogram prediction.

bare-metal EEG-controlled robotic arm

I love embedded programming, and I coded a simple OS from scratch for this project. Here's what I did: 1) On a RISC-V MCU, I built a simple OS (communication protocols, printf, memory management, keyboard protocol, interrupts). 2) I'm building a custom EEG acquisition system with a custom-built headset and the OpenBCI Cyton board. 3) I'm predicting motor intention from this data on a Mango Pi via spike detection and sorting, and Kalman filters / GRU neural nets.

lead for neurotech partnerships @ KIMS Hospitals, India

Reporting to the chairman, I scout new neurotechnologies to launch investigational studies at KIMS Hospitals, one of the largest hospital chains in India. I successfully did this for our MRgFUS program, one of the first in Asia. I am now looking for BCI partners to deploy their tech into KIMS’ clinical network.

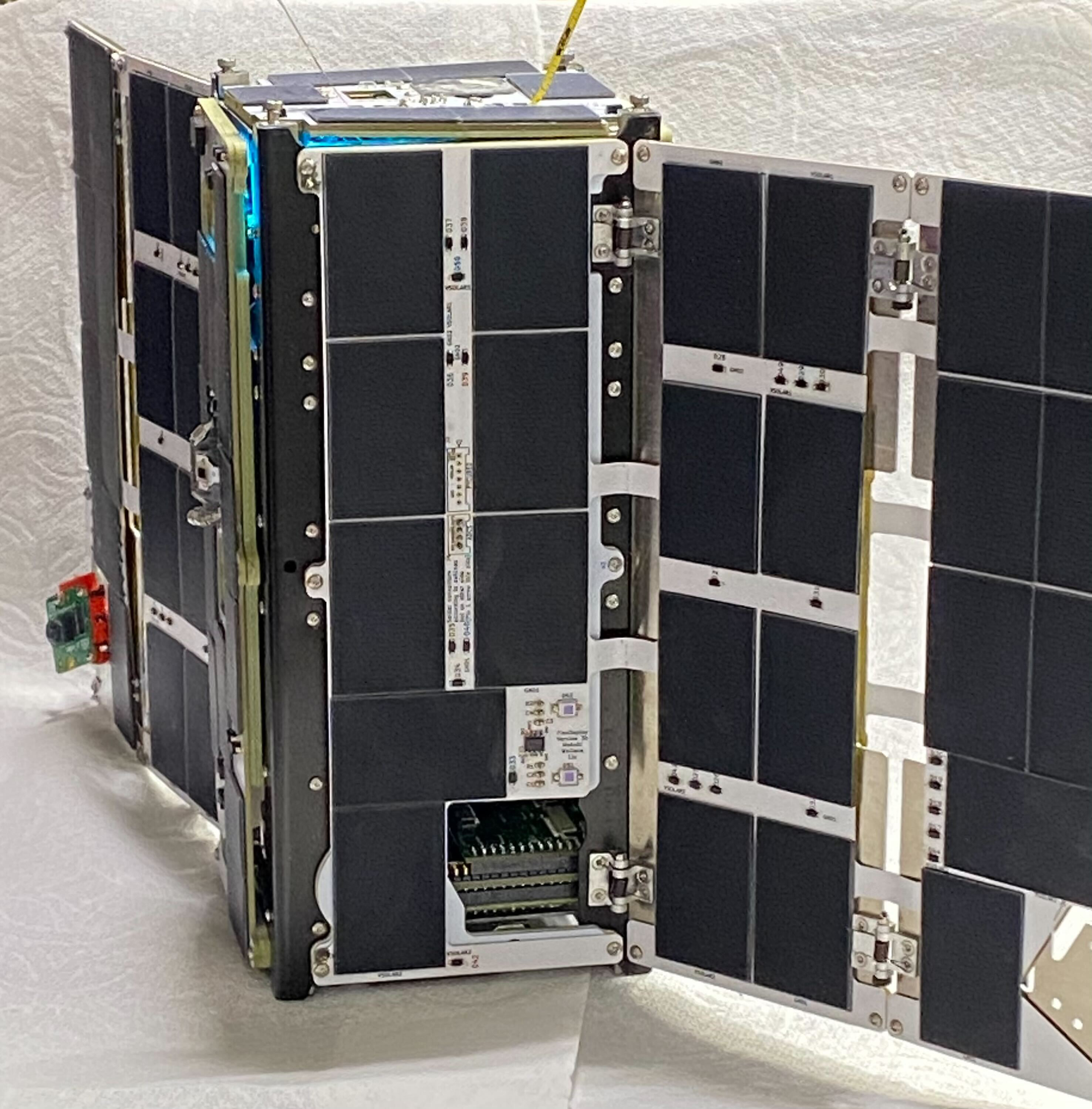

building a satellite @ stanford space initiative

I'm on a team building a CubeSat bound for orbit in the spring. Here's what I do: 1) embedded sensor integration: I develop C drivers for attitude determination and control, integrating sun sensors and magnetometers for orbital orientation. 2) control algorithms: I implement Kalman filters for state estimation and closed-loop control logic to drive actuators from sensor fusion data. 3) system architecture & testing: I built modular flight software with FSM frameworks and validated it with hardware-in-the-loop testing on custom chips and MCUs.

link to our repo →

here's the satellite!

AI & VR research, virtual human interaction lab @ stanford

I developed a cognitive state monitoring system by integrating EEG, EMG, and eye-tracking with real-time ML (Kalman filtering, GRUs) to create an adaptive VR environment. I also designed an underwater VR study with 90 participants to assess sensory conflict in VR users.